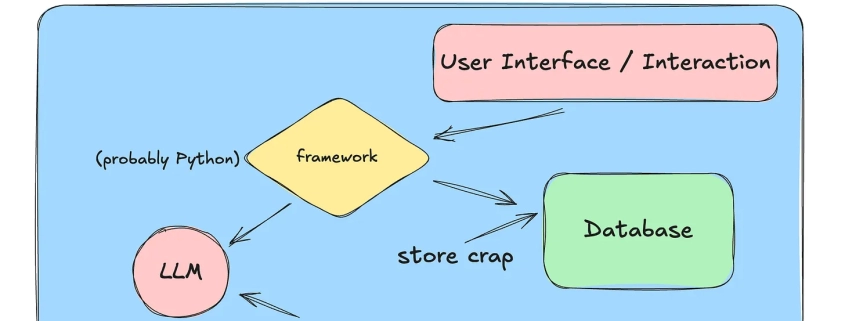

Things have changed a lot in the last year when it comes to LLMs and AI. On the one hand, it seems the AI skeptics for coding are increasingly confined to the corners of the internet. On the other, everyone else is dancing around in the middle, not sure of where everything should fall. Clearly, if we don’t use AI at all, we will become coding dinosaurs. But a sea of junior devs relying too much on Cursor has created a knowledge crisis, and demand for Senior+ devs has skyrocketed.

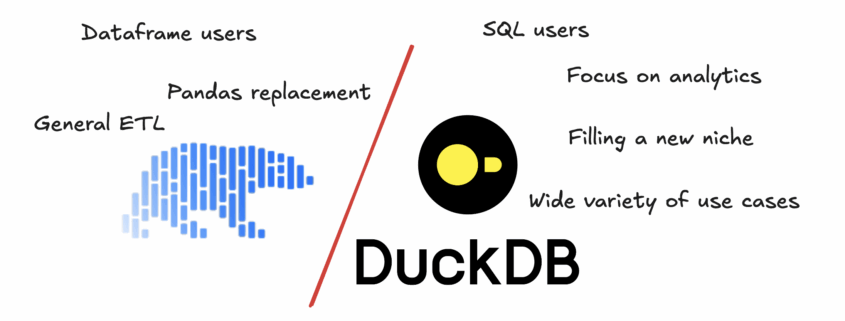

So, the classic newbie question. DuckDB vs Polars, which one should you pick?

This is an interesting question, and actually drives a lot of search traffic to this website on which you find yourself wasting time. I thank you for that.

This is probably the most classic type of question that all developers eventually ask at some point in their sad and depressing lives. Isn’t that the same story that is as old as time? This stick is better than that rock. Rust is better than C. Databricks better than Snowflake. You know, Delta Lake better than Iceberg.

And so the world keeps turning and grinding away.

DuckDB vs Polars? That’s the wrong question.

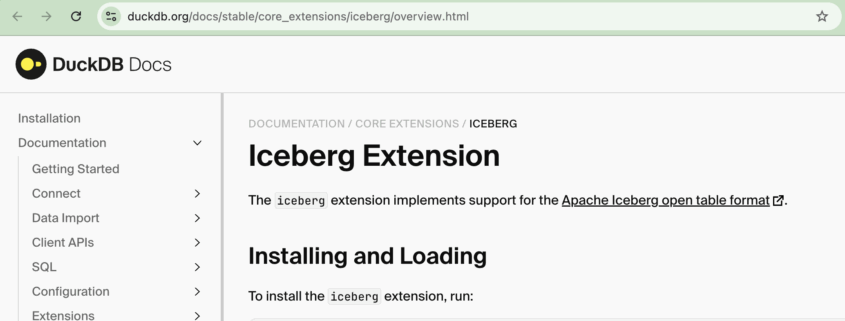

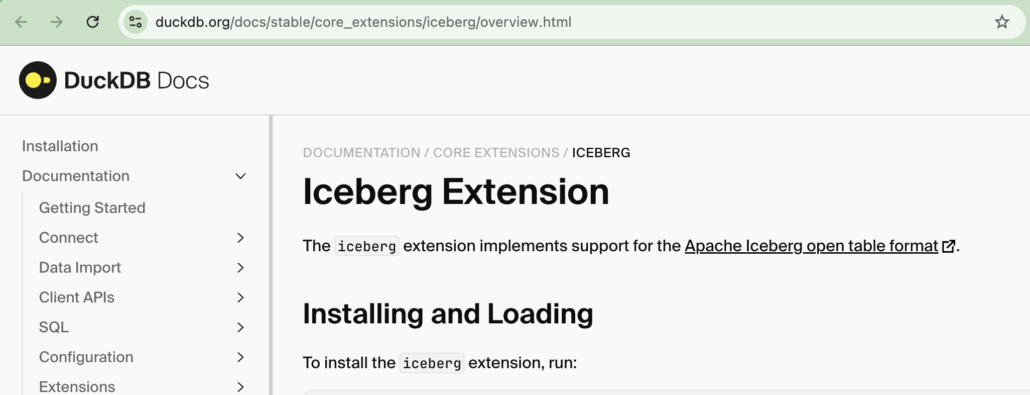

Well, all the bottom feeders (Iceberg and DuckDB users) are howling at the moon and dancing around a bonfire at midnight trying to cast their evil spells on the rest of us. Apache Iceberg writes with DuckDB? Better late than never I suppose.

Your witchy ways won’t work on me.

Not going to lie, Iceberg writes with MotherDuck is an interesting concept. MotherDuck is lit and Iceberg only puts a little ice on the fire.

Many other tools like Polars or Daft have been offering Iceberg writes for ages now, so it’s about time DuckDB preened its feathers and added write support. Up until now the DuckDB Iceberg Extension has been all about the read. But that is pretty much only good for HelloWorld() crap pumped and dumped on Redditors.

We need write support in the real production world. Oh, and not on some Iceberg table stored on your laptop you ninny.

So, you’re just a regular old Data Engineer crawling along through the data muck, barely keeping your head above the bits and bytes threatening to drown you. At one point in time you were full of spit and vinegar and enjoyed understanding and playing with every nuance known to man.

But now you are old and wizened, exhausted by the never-ending stream of JIRA tickets from which you can never get ahead. You write lots of Spark jobs, consider yourself a PySpark pipeline writing expert … but when it comes to Spark performance tuning and optimizations? That’s for the birds.

Well my friend, don’t let all the Scala experts look down on you and scare you into thinking Spark performance is simply too complex for the common developer. Liars, every last mother one of them.

Ok, not going to lie, I rarely find anything of value in the dregs of r/dataengineering, mostly, I fear, because it’s 90% freshers with little to no experience. These wet-behind-the-ears know-it-all engineers have never written a line of Perl or SSH’d into a server, and have no idea what a LAMP stack is. Weak. Sad.

We used to program our way to glory, up hill both ways in the snow. All you do is script kiddy some Python code through Cursor.

A recent post on Data Modeling, specifically that data modeling is dead, caught my eye. A rare piece of gold mixed in with the usual pile of crap. It’s some truth being spoken on the interwebs, so hold onto your panties, you bright-eyed data zealot. I agree 100% with this sentiment.

DATA MODELING IS DEAD.

Did you know that Polars, that Rust-based DataFrame tool that is one of the fastest tools on the market today, just got faster?? There is now GPU execution available on Polars that makes it 70% faster than before!!

I don’t know about you, but I grew up and cut my teeth in what feels like a special and Golden age of software engineering that is now relegated to the history books, a true onetime Renaissance of coding that was beautiful, bright, full of laughter and wonder, a time which has passed and will never return.

Or will it?

SQLMesh is an open-source framework for managing, versioning, and orchestrating SQL-based data transformations.

It’s in the same “data transformation” space as dbt, but with some important design and workflow differences.

What SQLMesh Is

SQLMesh is a next-generation data transformation framework designed to ship data quickly, efficiently, and without error. Data teams can efficiently run and deploy data transformations written in SQL or Python with visibility and control at any size.

So … what you are telling me is that it’s dbt … but with Python? Interesting enough concept, I should say. One would have to surmise that most people using SQLMesh would be using … SQL! Look at how smart I am.

You know, after literally multiple decades in the data space, writing code and SQL, at some point along that arduous journey, one might think this problem would be solved by me, or the tooling … yet alas, not to be.

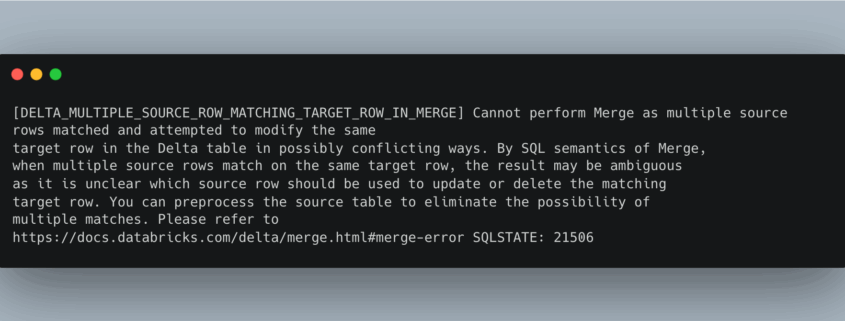

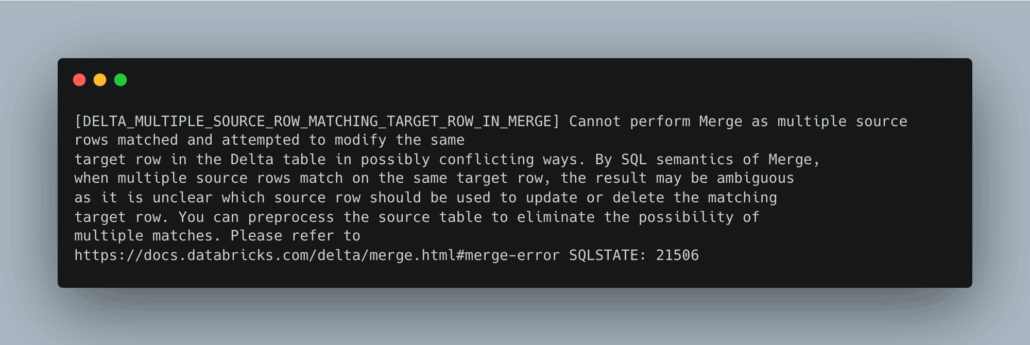

Regardless of the industry or the tools used, be it Pandas, Spark, or Postgres, duplicates are a common issue in pipelines, and duplicates in SQL remain the most classic and iconic problem of all. Things just never change, and humans never learn their lessons, at least I don’t.
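For what it’s worth, the duplicate hunt usually boils down to two queries: one to find the offending keys, one to keep a single row per key. Here is a minimal sketch of that idea, not a definitive recipe. The `orders` table, its columns, and the sample rows are all made up for illustration, and it assumes Python’s built-in `sqlite3` with SQLite 3.25 or newer (needed for the window function).

```python
import sqlite3

# Hypothetical table and rows, invented purely for illustration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (order_id INTEGER, customer TEXT, loaded_at TEXT)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [
        (1, "alice", "2024-01-01"),
        (1, "alice", "2024-01-02"),  # same key, loaded again later
        (2, "bob", "2024-01-01"),
        (3, "carol", "2024-01-01"),
        (3, "carol", "2024-01-03"),  # same key, loaded again later
    ],
)

# Step 1: find keys that show up more than once.
dupes = conn.execute(
    """
    SELECT order_id, COUNT(*) AS cnt
    FROM orders
    GROUP BY order_id
    HAVING COUNT(*) > 1
    ORDER BY order_id
    """
).fetchall()

# Step 2: keep only the most recently loaded row per key.
# ROW_NUMBER() requires SQLite 3.25+ (an assumption about your environment).
deduped = conn.execute(
    """
    SELECT order_id, customer, loaded_at
    FROM (
        SELECT *,
               ROW_NUMBER() OVER (
                   PARTITION BY order_id
                   ORDER BY loaded_at DESC
               ) AS rn
        FROM orders
    )
    WHERE rn = 1
    ORDER BY order_id
    """
).fetchall()

print(dupes)
print(deduped)
```

The same `GROUP BY ... HAVING` and `ROW_NUMBER()` pattern carries over to Postgres and Spark SQL more or less unchanged; which row counts as the "winner" (here, the latest `loaded_at`) is a business decision, not a technical one.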

Deletion Vectors are a soft‑delete mechanism in Delta Lake that enables Merge‑on‑Read (MoR) behavior, letting update/delete/merge operations mark row positions as removed without rewriting the underlying Parquet files. This contrasts with the older Copy‑on‑Write (CoW) model, where even a single deleted record triggers rewriting of entire files.

Delta Lake 2.3 added read-only support for deletion vectors; write support followed in later versions: DELETE in 2.4, UPDATE/MERGE in Delta 3.x.

✅ Why Use Deletion Vectors?

- Faster small changes: Only binary bitmap metadata is written, rather than rewriting large Parquet files.

- Write efficiency: Particularly efficient when changes affect sparse rows across many files.

- ACID semantics preserved: Readers still get the correct view by merging with deletion vector metadata at read time.

However:

- Read-time overhead: Filtering against deletion vector metadata adds overhead during queries.

- Maintenance needed: Unapplied deletion markers build up until compaction or purge.
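To make the CoW vs MoR trade-off concrete, here is a toy sketch in plain Python. It is emphatically not how Delta Lake implements any of this: the `DataFile` class and every function name below are hypothetical, and a plain Python `set` of row positions stands in for the real on-disk bitmap. It only illustrates the idea of marking positions as deleted, filtering them at read time, and cleaning up later.

```python
from dataclasses import dataclass, field


@dataclass
class DataFile:
    """Hypothetical stand-in for one immutable Parquet file."""
    rows: list
    # Toy "deletion vector": row positions marked as removed.
    deleted: set = field(default_factory=set)


def delete_copy_on_write(f, predicate):
    """CoW: rewrite the whole file without the matching rows."""
    return DataFile(rows=[r for r in f.rows if not predicate(r)])


def delete_merge_on_read(f, predicate):
    """MoR: leave the rows alone, just record which positions are gone."""
    f.deleted |= {i for i, r in enumerate(f.rows) if predicate(r)}


def read(f):
    """Readers merge the data with the deletion vector at read time."""
    return [r for i, r in enumerate(f.rows) if i not in f.deleted]


def compact(f):
    """Maintenance: physically drop deleted rows and reset the vector."""
    return DataFile(rows=read(f))


original = DataFile(rows=[{"id": 1}, {"id": 2}, {"id": 3}])

# CoW: a brand-new file gets written, even for a single deleted row.
cow_file = delete_copy_on_write(original, lambda r: r["id"] == 2)

# MoR: the file keeps all three rows; only the marker set changes.
mor_file = DataFile(rows=list(original.rows))
delete_merge_on_read(mor_file, lambda r: r["id"] == 2)

visible = read(mor_file)
compacted = compact(mor_file)
print(len(mor_file.rows), mor_file.deleted, visible)
```

Notice where the cost moved: the MoR delete touched nothing but a small set, while every `read` now pays for the filter until `compact` runs. That is the read-time overhead and maintenance burden from the list above, in miniature.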
