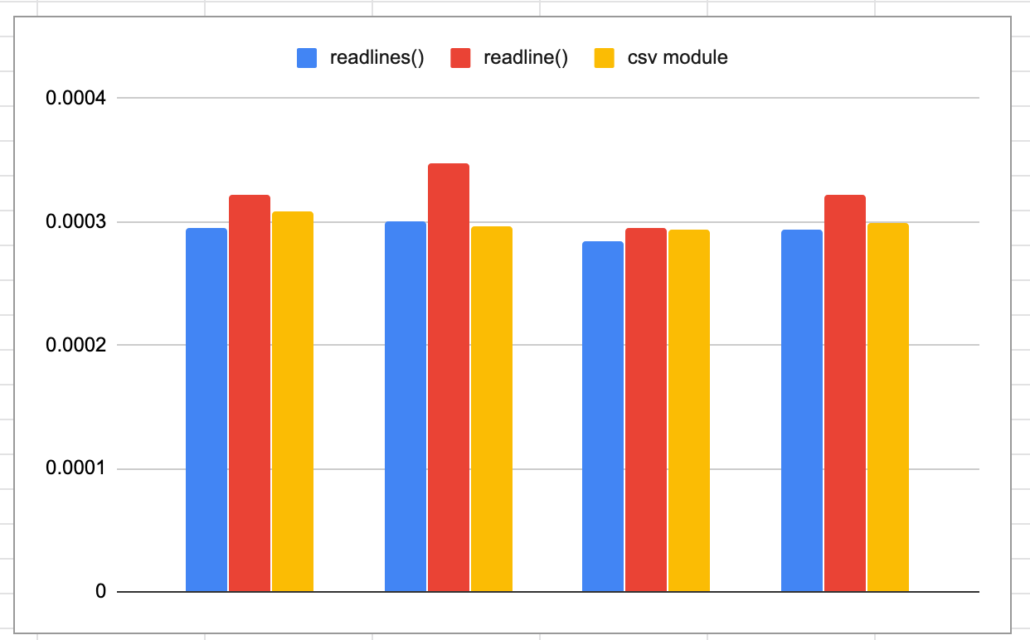

In Part 1 of my laborious journey from Python to Scala, I did some work with file operations, CSV files, and messing with the data. It took me a little longer than I expected to wrap my head around the Scala functional/object/immutable approach to software design. But in the end it felt satisfying, and I’m starting to become a convert. Scala makes you think a little harder than Python, is less forgiving, and requires more of you as the developer. In part deux, I figured the next topic to grapple with would be some simple retrieval of remote files and writing those files to disk. Also, I wanted to take a crack at classes in Scala.
