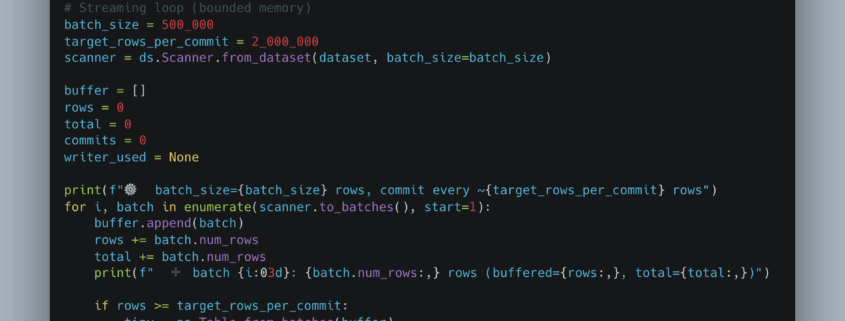

Over the last few years, I’ve found myself using PyArrow more and more for everyday data engineering work: ingesting data, and reading from and writing to all sorts of sources and sinks. Most of us already know Apache Arrow as the columnar in-memory format that underpins a lot of newer tech, such as DataFusion, which uses Arrow as its internal memory format.

But small though it might be, the pyarrow Python package is a force to be reckoned with, capable of blasting through all sorts of cloud-based datasets. It isn’t really a data transformation framework so much as a way to represent core datasets in memory and move them hither and thither, over the wire and from one format to another.